In modern product organizations, prioritization is expected to be more than a subjective exercise driven by loud opinions or short-term pressure. Product Owners and Product Managers are expected to make structured, defensible decisions that balance customer value, business impact, and delivery feasibility. The RICE Matrix has emerged as one of the most practical prioritization frameworks because it turns qualitative discussions into quantitative comparisons without requiring complex tools or heavy documentation. Its real strength lies in how easily it can be applied mentally during backlog refinement, roadmap discussions, or even informal stakeholder conversations.

Unlike many strategic frameworks that demand workshops, spreadsheets, or facilitation sessions, the RICE Matrix needs no special setup and works at both tactical and strategic levels. A Product Owner can apply RICE while ordering user stories in a sprint backlog, while a Product Manager can use the same logic to compare roadmap initiatives across quarters. This flexibility makes RICE especially valuable in agile environments where decisions must be revisited frequently and adjusted as new information emerges.

What Is the RICE Matrix and Why It Was Created

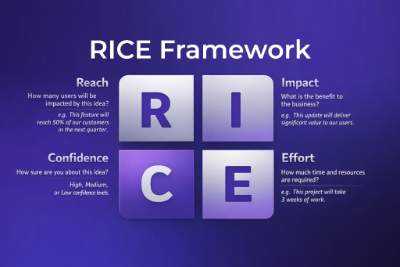

The RICE Matrix is a prioritization framework designed to reduce bias and increase consistency in decision-making. It evaluates initiatives using four factors: Reach, Impact, Confidence, and Effort. Each factor captures a different dimension of value and risk, allowing teams to compare initiatives that may otherwise feel impossible to rank objectively. The framework became popular in product-led organizations because it bridges the gap between intuition and data-informed judgment.

Historically, many product teams relied on intuition, seniority, or urgency to determine priorities. This often resulted in over-investment in highly visible features while quieter but more valuable improvements were neglected. The RICE Matrix addresses this problem by forcing teams to articulate assumptions and make trade-offs explicit. Instead of arguing about which feature “feels more important,” teams can compare scores and discuss the assumptions behind them.

Another reason for RICE’s adoption is its compatibility with agile delivery. Agile teams already estimate effort and discuss impact during refinement sessions. RICE simply structures those discussions into a repeatable model that improves alignment across product, engineering, and business stakeholders.

Understanding the RICE Formula in Practice

The RICE score is calculated using the following formula:

(Reach × Impact × Confidence) ÷ Effort

Each component serves a distinct purpose, and interpreting them correctly is essential to using the framework effectively. Reach, Impact, and Confidence multiply together in the numerator, so a weak value in any one of them lowers the whole score. Effort sits in the denominator, so the formula deliberately balances upside potential against delivery cost: a high-effort initiative must demonstrate proportionally higher value to justify its priority.
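To make the trade-off concrete, here is a minimal Python sketch of the calculation. The units and scales are assumptions chosen for illustration, since the formula itself does not prescribe them: Reach as users affected per quarter, Impact as a relative multiplier, Confidence as a fraction between 0 and 1, and Effort in person-months. The `Initiative` class, the `rice_score` function, and the two backlog items are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Initiative:
    name: str
    reach: float       # assumed unit: users affected per quarter
    impact: float      # assumed scale: relative multiplier (e.g. 0.5 to 3)
    confidence: float  # assumed scale: fraction between 0 and 1
    effort: float      # assumed unit: person-months, must be positive


def rice_score(item: Initiative) -> float:
    """Return (Reach x Impact x Confidence) / Effort for one initiative."""
    if item.effort <= 0:
        raise ValueError("effort must be positive")
    return item.reach * item.impact * item.confidence / item.effort


# Hypothetical backlog items, invented purely for illustration.
backlog = [
    Initiative("Billing redesign", reach=6000, impact=2, confidence=0.5, effort=12),
    Initiative("Onboarding checklist", reach=2000, impact=1, confidence=0.8, effort=2),
]

# Highest score first: more expected value per unit of effort.
ranked = sorted(backlog, key=rice_score, reverse=True)

for item in ranked:
    print(f"{item.name}: {rice_score(item):.0f}")
```

In this made-up example the billing redesign reaches three times as many users with twice the impact, yet its lower confidence and sixfold effort leave it with a score of 500 against 800 for the onboarding checklist. The numbers matter less than the comparison they force: the larger initiative has to earn its place.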

What makes this formula powerful is not mathematical precision, but structured thinking. Even when teams do not calculate exact numbers, the act of mentally comparing Reach, Impact, Confidence, and Effort already improves decision quality. For Product Owners working under time pressure, this mental application is often enough to prevent poor prioritization decisions.